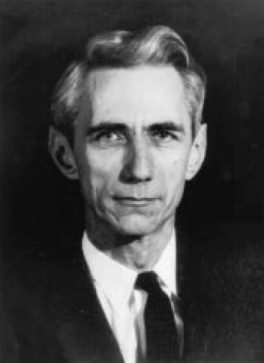

Many jugglers have heard about the juggling robot invented by Claude Shannon, and jugglers of a mathematical bent will know of Shannon’s juggling theorem. However, among mathematicians and computer scientists, Claude Shannon is a legend, widely recognized as one of the most brilliant men of the twentieth century. It is impossible to overstate his importance in the early development of computers and digital communication. In 1990, Scientific American called his paper on information theory “the Magna Carta of the Information Age.”

Shannon and information theory

With the encouragement of Vannevar Bush, Shannon decided to follow up his master’s degree with a doctorate in mathematics, a task that he completed in a mere year and a half. Not long after receiving this degree in the spring of 1940, he joined Bell Labs. Since U.S. entry into World War II was clearly just a matter of time, Shannon immediately went to work on military projects such as antiaircraft fire control and cryptography (code making and breaking).

Nonetheless, Shannon always found time to work on the fundamental theory of communications, a topic that had piqued his interest several years earlier. “Off and on,” Shannon had written to Bush in February 1939, in a letter now preserved in the Library of Congress archives, “I have been working on an analysis of some of the fundamental properties of general systems for the transmission of intelligence, including telephony, radio, television, telegraphy, etc.” To make progress toward that goal, he needed a way to specify what was being transmitted during the act of communication.

Building on the work of Bell Labs engineer Ralph Hartley, Shannon formulated a rigorous mathematical expression for the concept of information. At least in the simplest cases, Shannon said, the information content of a message was the number of binary ones and zeroes required to encode it. If you knew in advance that a message would convey a simple choice (yes or no, true or false), then one binary digit would suffice: a single one or a single zero told you all you needed to know. The message would thus be defined to have one unit of information. A more complicated message, on the other hand, would require more digits to encode, and would contain that much more information; think of the thousands or millions of ones and zeroes that make up a word-processing file.
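As a rough illustration of the counting idea above, here is a minimal Python sketch of how many binary digits it takes to single out one message from a set of equally likely possibilities. The helper name `bits_needed` is hypothetical, introduced only for this example, and the equally-likely assumption covers only the simplest case described here.

```python
def bits_needed(num_choices: int) -> int:
    """Binary digits needed to label one of `num_choices` equally likely messages."""
    if num_choices < 1:
        raise ValueError("need at least one possible message")
    # Equivalent to ceil(log2(num_choices)), computed with exact integer arithmetic.
    return (num_choices - 1).bit_length()


print(bits_needed(2))   # a yes/no choice
print(bits_needed(26))  # one letter from a 26-letter alphabet
```

A yes/no choice needs a single digit, while picking one letter out of 26 needs five, since four digits can distinguish only 16 possibilities.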

As Shannon realized, this definition did have its perverse aspects. A message might carry only one binary unit of information (“Yes”) but a world of meaning, as in, “Yes, I will marry you.” But the engineers’ job was to get the data from here to there with a minimum of distortion, regardless of its content. And for that purpose, the digital definition of information was ideal, because it allowed for a precise mathematical analysis. What are the limits to a communication channel’s capacity? How much of that capacity can you use in practice? What are the most efficient ways to encode information for transmission in the inevitable presence of noise?

Judging by his comments many years later, Shannon had outlined his answers to such questions by 1943. Oddly, however, he seems to have felt no urgency about sharing those insights; some of his closest associates at the time swear they had no clue that he was working on information theory. Nor was he in any hurry to publish and thus secure credit for the work. “I was more motivated by curiosity,” he explained in his 1987 interview, adding that the process of writing for publication was “painful.” Ultimately, however, Shannon overcame his reluctance. The result: the groundbreaking paper “A Mathematical Theory of Communication,” which appeared in the July and October 1948 issues of the Bell System Technical Journal.

Shannon’s ideas exploded with the force of a bomb. “It was like a bolt out of the blue,” recalls John Pierce, who was one of Shannon’s best friends at Bell Labs, and yet as surprised by Shannon’s paper as anyone. “I don’t know of any other theory that came in a complete form like that, with very few antecedents or history.” Indeed, there was something about this notion of quantifying information that fired people’s imaginations. “It was a revelation,” says Oliver Selfridge, who was then a graduate student at MIT. “Around MIT the reaction was, Brilliant! Why didn’t I think of that?”

Claude Shannon the juggler

I first met Claude at the MIT Juggling Club. One nice thing about juggling at MIT is that you never know who will show up. For example, one day Doc Edgerton, inventor of the strobe light, stopped by the juggling club and asked if he could photograph some of us juggling under strobe lights. So it wasn’t a great surprise when a cheerful, gray-haired professor stopped by the club one afternoon and said to me, “Can I measure your juggling?” That was my introduction to Claude Shannon.

Unlike most brilliant theoretical mathematicians, Claude was also wonderfully adept with tools and machines, and frequently built little gadgets and inventions, usually with the goal of being whimsical rather than practical. “I’ve always pursued my interests without much regard to financial value or value to the world. I’ve spent lots of time on totally useless things,” Shannon said in 1983. These useless things would include his juggling robot, a mechanical mouse that could navigate a maze, and a computing machine that did all its calculations in Roman numerals.

Claude never did care about money. He never even put his paycheck into a bank account that paid interest until he married and his wife Betty suggested it to him. Still, he became a very wealthy man, partly as a result of early investments with some of his computer scientist pals, including the founders of Teledyne and of Hewlett-Packard. When he did think about finance, Claude was as brilliant at that as with anything else he set his mind to. Knowing I was an economist, he once explained to me his thoughts on investing. Some were wonderfully practical, as when he said he’d always buy stocks rather than gold, because companies grow and metals don’t. Some were more esoteric; for example, he had ideas regarding mean-variance analysis that jibe well with many aspects of modern portfolio theory.

Claude told me this story. He may have been kidding, but it illustrates both his sense of humor and his delightfully self-deprecating nature, and it certainly could be true. The story is that Claude is in the middle of giving a lecture to mathematicians in Princeton when the door in the back of the room opens, and in walks Albert Einstein. Einstein stands listening for a few minutes, whispers something in the ear of someone in the back of the room, and leaves. At the end of the lecture, Claude hurries to the back of the room to find the person Einstein had whispered to, to find out what the great man had to say about his work. The answer: Einstein had asked directions to the men’s room.

Claude wrote the first paper describing how one might program a computer to play chess. He wrote “Communication Theory of Secrecy Systems,” which the Boston Globe newspaper said “transformed cryptography from an art to a science.” Yet neither of these was his greatest work.

Here’s my own interpretation of Claude’s two most famous and important papers. His 1937 thesis basically said, “if we could someday invent a computing machine, the way to make it think would be to use binary code, by stringing together switches and applying Boole’s logic system to the result.” This work, done while he was still a student at MIT, has been called the most important master’s thesis of the twentieth century. The idea was immediately put to use in the design of telephone switching systems, and is indeed how all modern computers think.
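To make the switches-and-logic idea concrete, here is a small Python sketch, offered as an illustration rather than a reconstruction of the thesis itself. It assumes the convention that `True` means a closed (conducting) switch, so that two switches in series behave like Boolean AND and two in parallel behave like Boolean OR. The function names `series`, `parallel`, and `half_adder` are hypothetical, chosen for this example.

```python
def series(a: bool, b: bool) -> bool:
    """Two switches in series conduct only if both are closed (Boolean AND)."""
    return a and b


def parallel(a: bool, b: bool) -> bool:
    """Two switches in parallel conduct if either is closed (Boolean OR)."""
    return a or b


def half_adder(a: bool, b: bool) -> tuple[bool, bool]:
    """Add two binary digits using only the switch combinations above."""
    carry = series(a, b)
    # Sum bit: at least one input is on, but not both.
    total = series(parallel(a, b), not carry)
    return total, carry


for a in (False, True):
    for b in (False, True):
        print(int(a), int(b), tuple(int(x) for x in half_adder(a, b)))
```

Chaining such adders together gives binary arithmetic on numbers of any length, which is one sense in which strings of switches can be said to compute.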

But that was only Claude’s second most important idea. His most famous paper, written in 1948 at Bell Labs, created what is now known as information theory. In “A Mathematical Theory of Communication,” Shannon proposed the idea of converting any kind of data (such as pictures, sounds, or text) to zeroes and ones, which could then be communicated without errors. Data are reduced to bits of information, and information transmission is then measured in terms of the amount of disorder or randomness the data contain (entropy). Optimal communication of data is achieved by removing all randomness and redundancy (now known as the Shannon limit). In short, Claude basically invented digital communication, as is now used by computers, CDs, and cell phones. In addition to communications, fields as diverse as computer science, neurobiology, code breaking, and genetics have all been revolutionized by the application of Shannon’s information theory. Without Claude’s work, the internet as we know it could not have been created.
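For readers who want to see what measuring randomness in bits looks like, here is a minimal Python sketch of Shannon’s entropy formula, H = sum of p × log2(1/p) over the symbols of a message. It makes a strong simplifying assumption: each symbol is treated as drawn independently, with probabilities equal to the frequencies observed in the message itself. The function name `entropy_bits_per_symbol` is hypothetical.

```python
import math
from collections import Counter


def entropy_bits_per_symbol(message: str) -> float:
    """Estimate entropy in bits per symbol from the symbol frequencies in `message`."""
    if not message:
        return 0.0
    total = len(message)
    counts = Counter(message)
    # Sum p * log2(1/p) over every distinct symbol.
    return sum((c / total) * math.log2(total / c) for c in counts.values())


print(entropy_bits_per_symbol("aaaa"))
print(entropy_bits_per_symbol("abab"))
print(entropy_bits_per_symbol("abcd"))
```

A message that repeats one symbol is perfectly predictable and scores zero bits per symbol, while a message that uses four symbols equally often scores two bits per symbol, matching the two binary digits needed to label four choices.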

One day, almost immediately after I’d arrived at his house, Claude said to me, “Do you mind if I hang you upside down by your legs?” He had realized that while bounce juggling is much easier than toss juggling in terms of energy requirements, throwing upward as in toss juggling is physiologically easier, and so he wanted to try combining the two, which meant bounce juggling while hanging upside down.

For every invention he built or theorem he proved, he had a hundred other ideas that he just never got around to finishing. One juggling example: He showed me a vacuum cleaner strapped to a pole, pointing straight up, with the motor reversed to blow instead of suck. He turned it on, and placed a styrofoam ball in the wind current. It hovered about a foot above the vacuum. He then varied the speed of the motor, and the ball drifted up and down as the speed changed. “Now,” he said, “imagine three balls and two blowers, with the blowers angled a bit towards each other, and the motors timed to alternate speeds.”

The last time I saw Claude, Alzheimer’s disease had gotten the upper hand. As sad as it is to see anyone’s light slowly fade, it is an especially cruel fate to be suffered by a genius. He vaguely remembered I juggled, and cheerfully showed me the juggling displays in his toy room, as if for the first time. And, despite the loss of memory and reason, he was every bit as warm, friendly, and cheerful as the first time I met him. Billions of people may have benefited from his work, but I, and thousands of others who knew him a little bit, are eternally grateful to have known him as a person.